I recently spent about two days bringing up a simple site-to-site IPsec VPN tunnel between a Cisco ASA (and a Cisco FTD) and a Linux machine running strongSwan, using digital certificates to authenticate the peers. The configuration was simple, but because of a small “detail” and a lack of good debugging information on the Cisco ASA/FTD, what should have been a five-minute job took a couple of days of troubleshooting, reading the strongSwan source code, and trying configuration changes. In the end I was able to bring up the tunnel and got to the bottom of what the Cisco ASA/FTD did not like about what strongSwan was sending. Here I document the configurations and, at the end, show what the Cisco ASA/FTD was choking on.

Cisco ASA Configuration

The basic VPN configuration on the Cisco ASA side looks like this:

access-list traffic-to-encrypt extended permit ip 10.123.0.0 255.255.255.0 10.123.1.0 255.255.255.0
!
crypto ipsec ikev2 ipsec-proposal IPSEC-PROPOSAL
 protocol esp encryption aes-256
 protocol esp integrity sha-256 sha-1
!
crypto map MYMAP 10 match address traffic-to-encrypt
crypto map MYMAP 10 set peer 10.118.57.149
crypto map MYMAP 10 set ikev2 ipsec-proposal IPSEC-PROPOSAL
crypto map MYMAP 10 set trustpoint TRUSTPOINT chain
!
crypto map MYMAP interface outside
!
crypto ca trustpoint TRUSTPOINT
 revocation-check crl
 keypair TRUSTPOINT
 crl configure
  policy static
  url 1 http://www.chapus.net/ChapulandiaCA.crl
!
crypto ikev2 policy 10
 encryption aes-256
 integrity sha256
 group 14
 prf sha
 lifetime seconds 86400
!
crypto ikev2 enable outside
!
tunnel-group 10.118.57.149 type ipsec-l2l
tunnel-group 10.118.57.149 ipsec-attributes
 ikev2 remote-authentication certificate
 ikev2 local-authentication certificate TRUSTPOINT

Note that the FTD configuration is very similar, but it has to be performed via the Firepower Management Center (FMC) GUI. In fact, after doing the configuration via FMC one can log into the FTD CLI using SSH and run the command “show running-config” and see the same configuration shown above for the ASA.

strongSwan Configuration (ipsec.conf)

The ipsec.conf configuration file (typically located at /etc/ipsec.conf) is the old way of configuring the strongSwan IPsec subsystem. The following ipsec.conf file contents allowed the tunnel to come up with no problems:

config setup
    strictcrlpolicy=yes
    cachecrls = yes

ca MyCA
    crluri = http://www.example.com/MyCA.crl
    cacert = ca.pem
    auto = add

conn %default
    ikelifetime=60m
    keylife=20m
    rekeymargin=3m
    keyingtries=1
    keyexchange=ikev2
    mobike=no

conn net-net
    leftcert=rpi.pem
    leftsubnet=10.123.1.0/24
    leftfirewall=yes
    right=10.122.109.113
    rightid="C=US, ST=CA, L=SF, O=Acme, OU=CSS, CN=asa, E=admin@example.com"
    rightsubnet=10.123.0.0/24
    auto=add

In addition to the ipsec.conf file, the ipsec.secrets file (typically /etc/ipsec.secrets) also has to be edited, in this case to specify the name of the RSA private key file. Our ipsec.secrets file looks like this:

# ipsec.secrets - strongSwan IPsec secrets file
: RSA mykey.pem

Finally, the certificates and the RSA private key must be placed (all in PEM format) in specific directories under /etc/ipsec.d:

The Linux machine’s identity certificate goes into /etc/ipsec.d/certs/. strongSwan automatically loads that certificate at startup.

The Certification Authority (CA) root certificate goes into /etc/ipsec.d/cacerts/

The private key must be placed in /etc/ipsec.d/private/

strongSwan Configuration (swanctl.conf)

swanctl.conf is a new configuration file that is used by the swanctl(8) tool to load configurations and credentials into the strongSwan IKE daemon. This is the “new” way to configure the strongSwan IPsec subsystem. The configuration file syntax is very different, though the parameters that need to be set to be able to bring up the IPsec tunnel are the same as in the case of the ipsec.conf-based configuration.

A swanctl.conf-based configuration is more modular. Configuration files typically live under /etc/swanctl/. For our specific connection, we put the configuration in the file /etc/swanctl/conf.d/example.conf, which gets included from /etc/swanctl/swanctl.conf. Our /etc/swanctl/conf.d/example.conf file contains the following:

connections {
    # Section for an IKE connection named <conn>.
    my-connection {
        # IKE major version to use for connection.
        version = 2
        # Remote address(es) to use for IKE communication, comma separated.
        # remote_addrs = %any
        remote_addrs = 10.122.109.113
        # Section for a local authentication round.
        local-1 {
            # Comma separated list of certificate candidates to use for
            # authentication.
            certs = rpi.pem
        }
        children {
            # CHILD_SA configuration sub-section.
            my-connection {
                # Local traffic selectors to include in CHILD_SA.
                # local_ts = dynamic
                local_ts = 10.123.1.0/24
                # Remote selectors to include in CHILD_SA.
                # remote_ts = dynamic
                remote_ts = 10.123.0.0/24
            }
        }
    }
}

# Section defining secrets for IKE/EAP/XAuth authentication and private key
# decryption.
secrets {
    # Private key decryption passphrase for a key in the private folder.
    private-rpikey {
        # File name in the private folder for which this passphrase should be
        # used.
        file = rpi.pem
        # Value of decryption passphrase for private key.
        # secret =
    }
}

# Section defining attributes of certification authorities.
authorities {
    # Section defining a certification authority with a unique name.
    MyA {
        # CA certificate belonging to the certification authority.
        cacert = myca.pem
        # Comma-separated list of CRL distribution points.
        crl_uris = http://www.chapus.net/ChapulandiaCA.crl
    }
}

Bringing Up the Tunnel on Interesting Traffic

To bring up the tunnel when “interesting” traffic arrives, use the “start_action” configuration parameter; start_action = trap installs trap policies that trigger the IKE negotiation as soon as matching traffic is seen. Otherwise, the IPsec tunnel has to be brought up manually with the command swanctl --initiate --child <name>.

Here’s an example configuration that uses “start_action”:

connections {
    # Section for an IKE connection named <conn>.
    lab-vpn {
        version = 2
        remote_addrs = 10.1.10.114
        local-1 {
            certs = rpi.pem
        }
        children {
            # CHILD_SA configuration sub-section.
            lab-vpn {
                local_ts = 10.123.1.0/24, 10.10.0.0/16
                remote_ts = 10.123.0.0/24
                start_action = trap
            }
        }
    }
}

Automatically Starting Charon

The charon-systemd daemon implements the IKE daemon very similarly to charon, but is specifically designed for use with systemd. It uses the systemd libraries for native integration and comes with a simple systemd service file.

In versions of strongSwan prior to 5.8.0, the systemd service to enable was “strongswan-swanctl”; in 5.8.0 and later it is “strongswan”.

To start the charon-systemd daemon when the system boots, use systemctl to enable the service:

systemctl enable strongswan

Reference: https://wiki.strongswan.org/projects/strongswan/wiki/Charon-systemd
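A provisioning script that has to support both older and newer strongSwan releases could pick the unit name from the version string, following the 5.8.0 rename described above. This is a minimal sketch; the function name is hypothetical, and it assumes you already have the strongSwan version as a string such as "5.9.14".

```python
import re


def charon_systemd_unit(version):
    """Return the systemd unit to enable for charon-systemd (renamed in 5.8.0)."""
    match = re.match(r"(\d+)\.(\d+)(?:\.(\d+))?", version)
    if match is None:
        raise ValueError(f"unrecognized strongSwan version: {version!r}")
    # A missing patch component (e.g. "5.8") is treated as 0.
    major, minor, patch = (int(part or 0) for part in match.groups())
    if (major, minor, patch) >= (5, 8, 0):
        return "strongswan"
    return "strongswan-swanctl"


for v in ("5.7.2", "5.8.0", "5.9.14"):
    print(v, "->", charon_systemd_unit(v))
```

The returned name is what you would then pass to systemctl enable.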

Issues

There were several serious issues that I ran into when trying to bring up the site-to-site tunnel. Most of them appear to be bugs.

Cisco ASA/FTD Unable to Process Downloaded CRL When Cisco WSA in the Middle

In this issue, the Cisco ASA/FTD is apparently unable to parse a downloaded CRL when a Cisco WSA proxy server sits transparently in the middle. The Cisco WSA returns the file to the Cisco ASA/FTD, but the ASA apparently does not like something in the HTTP headers (the “Via” header? I don’t know). There is nothing wrong with the CRL itself: I performed a packet capture on the ASA, extracted the CRL file from the capture, and it is not corrupted. In fact, I have seen the revocation check work sometimes; I believe the problem occurs when the CRL is present in the WSA’s cache, which would explain why it works only sometimes. I configured the web server hosting the CRL to prevent caching of the file, but the problem persists.

Workaround for this problem: configure the ASA to fall back to no revocation check, i.e.:

crypto ca trustpoint X
 revocation-check crl none
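One quick sanity check for a CRL extracted from a packet capture, as described above, is that the length declared in its outer DER SEQUENCE matches the file size; a truncated or padded file fails it. This is a minimal sketch, assuming the CRL is DER-encoded (a PEM file would need its base64 body decoded first). The helper name is hypothetical, and it does not verify the signature or any CRL fields.

```python
def der_length_matches(data):
    """True if the outermost DER TLV's declared length covers exactly len(data)."""
    if len(data) < 2:
        return False
    first = data[1]
    if first < 0x80:
        # Short form: the byte itself is the content length.
        header, length = 2, first
    else:
        # Long form: the low 7 bits give the number of length bytes that follow.
        n = first & 0x7F
        if n == 0 or len(data) < 2 + n:
            return False
        header = 2 + n
        length = int.from_bytes(data[2:header], "big")
    return header + length == len(data)


print(der_length_matches(bytes([0x30, 0x03, 1, 2, 3])))
print(der_length_matches(bytes([0x30, 0x03, 1, 2])))
```

For the real check, pass the bytes of the CRL file extracted from the capture; a result of True only means the framing is intact.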

PRF Algorithms Other Than SHA1 Do Not Work

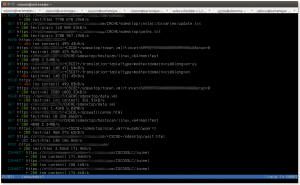

No idea if the problem here is on the Cisco ASA/FTD side or on the strongSwan side. All I know is that strongSwan fails to authenticate the peer. I see these messages in the strongSwan logs:

[ENC] parsed IKE_AUTH response 1 [ V IDr CERT AUTH SA TSi TSr N(ESP_TFC_PAD_N) N(NON_FIRST_FRAG) N(MOBIKE_SUP) ]
[IKE] received end entity cert ""
[CFG] using certificate ""
[CFG] using trusted ca certificate ""
[CFG] checking certificate status of ""
[CFG] using trusted certificate ""
[CFG] crl correctly signed by ""
[CFG] crl is valid: until Jan 06 02:12:01 2018
[CFG] using cached crl
[CFG] certificate status is good
[CFG] reached self-signed root ca with a path length of 0
[IKE] signature validation failed, looking for another key
[CFG] using certificate ""
[CFG] using trusted ca certificate ""
[CFG] checking certificate status of ""
[CFG] using trusted certificate ""
[CFG] crl correctly signed by ""
[CFG] crl is valid: until Jan 06 02:12:01 2018
[CFG] using cached crl
[CFG] certificate status is good
[CFG] reached self-signed root ca with a path length of 0
[IKE] signature validation failed, looking for another key
[ENC] generating INFORMATIONAL request 2 [ N(AUTH_FAILED) ]
[NET] sending packet: from 10.118.57.151[4500] to 10.122.109.113[4500] (80 bytes)
initiate failed: establishing CHILD_SA 'css-lab' failed

Workaround for this problem: Use SHA-1 as the PRF. For example, on the ASA, one could use:

crypto ikev2 policy 10
 encryption aes-256
 integrity sha256
 group 19
 prf sha

Certificates Using ASN.1 “PRINTABLESTRING” Don’t Work on Cisco ASA/FTD

This one was very difficult to troubleshoot. It might be a bug on the strongSwan side, but I am not sure. Depending on the configuration, the IKEv2 identity that strongSwan sends to the Cisco ASA/FTD is a Distinguished Name (DN) in binary ASN.1 (DER) encoding. When strongSwan builds this encoding itself, it uses the ASN.1 type “PRINTABLESTRING” instead of “UTF8STRING” for fields like countryName, stateOrProvinceName, localityName, organizationName, commonName, etc. Otherwise, the IKEv2 identity is identical to the identity that strongSwan would obtain directly from the certificate.

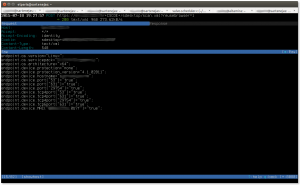

On the ASA/FTD side, when the ASA/FTD receives an identity whose fields are of type “PRINTABLESTRING”, it seems to consider the identity bad and chokes on it. This is hard to troubleshoot because there apparently are no good debug messages showing what is going on. In the bad case, one sees these messages:

%ASA-7-711001: IKEv2-PLAT-3: RECV PKT [IKE_AUTH] [10.118.57.149]:500->[10.122.109.113]:500 InitSPI=0x596a08fccb72412a RespSPI=0x5d757649514ab5e8 MID=00000001
%ASA-7-711001: (34):
%ASA-7-711001: IKEv2-PROTO-2: (34): Received Packet [From 10.118.57.149:500/To 10.122.109.113:500/VRF i0:f0]
[...]
%ASA-7-711001: IDr%ASA-7-711001: Next payload: CERT, reserved: 0x0, length: 128
%ASA-7-711001: Id type: DER ASN1 DN, Reserved: 0x0 0x0
%ASA-7-711001:
%ASA-7-711001: 30 76 31 0b 30 09 06 03 55 04 06 13 02 55 53 31
%ASA-7-711001: 0b 30 09 06 03 55 04 08 13 02 4e 43 31 0c 30 0a
%ASA-7-711001: 06 03 55 04 07 13 03 52 54 50 31 0e 30 0c 06 03
%ASA-7-711001: 55 04 0a 13 05 43 69 73 63 6f 31 0c 30 0a 06 03
%ASA-7-711001: 55 04 0b 13 03 43 53 53 31 0c 30 0a 06 03 55 04
%ASA-7-711001: 03 13 03 72 70 69 31 20 30 1e 06 09 2a 86 48 86
%ASA-7-711001: f7 0d 01 09 01 16 11 65 6c 70 61 72 69 73 40 63
%ASA-7-711001: 69 73 63 6f 2e 63 6f 6d
[...]
%ASA-7-711001: IKEv2-PROTO-5: (34): SM Trace-> SA: I_SPI=596A08FCCB72412A R_SPI=5D757649514AB5E8 (I) MsgID = 00000001 CurState: I_WAIT_AUTH Event: EV_RECV_AUTH
%ASA-7-711001: IKEv2-PROTO-5: (34): Action: Action_Null
%ASA-7-711001: IKEv2-PROTO-5: (34): SM Trace-> SA: I_SPI=596A08FCCB72412A R_SPI=5D757649514AB5E8 (I) MsgID = 00000001 CurState: I_PROC_AUTH Event: EV_CHK4_NOTIFY
%ASA-7-711001: IKEv2-PROTO-2: (34): Process auth response notify
%ASA-7-711001: IKEv2-PROTO-5: (34): SM Trace-> SA: I_SPI=596A08FCCB72412A R_SPI=5D757649514AB5E8 (I) MsgID = 00000001 CurState: I_PROC_AUTH Event: EV_PROC_MSG
%ASA-7-711001: IKEv2-PLAT-2: (34): peer auth method set to: 1
%ASA-7-711001: IKEv2-PROTO-5: (34): SM Trace-> SA: I_SPI=596A08FCCB72412A R_SPI=5D757649514AB5E8 (I) MsgID = 00000001 CurState: I_WAIT_AUTH Event: EV_RE_XMT
%ASA-7-711001: IKEv2-PROTO-2: (34): Retransmitting packet
%ASA-7-711001: (34):
%ASA-7-711001: IKEv2-PROTO-2: (34): Sending Packet [To 10.118.57.149:500/From 10.122.109.113:500/VRF i0:f0]

As can be seen, the state machine goes from I_WAIT_AUTH (waiting for the authentication payload) to I_PROC_AUTH (processing the authentication payload), receives an “EV_PROC_MSG” (process message) event, and then goes back to the I_WAIT_AUTH state with a retransmit (EV_RE_XMT) event. There is no explanation or message indicating why processing the IKEv2 identity failed.
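The DN in the bad-case IDr payload can be decoded by hand to confirm which ASN.1 string types strongSwan sent. The following is a minimal Python sketch, not a full DER parser: it assumes short-form lengths and a single attribute per RDN (both true for this dump), and the helper names are hypothetical. The hex is copied from the ASA debug output above.

```python
# DER tags for the string types of interest.
STRING_TAGS = {0x0C: "UTF8String", 0x13: "PrintableString", 0x16: "IA5String"}

# Encoded content bytes of the attribute OIDs that appear in the dump.
OID_NAMES = {
    bytes.fromhex("550406"): "C",              # 2.5.4.6 countryName
    bytes.fromhex("550408"): "ST",             # 2.5.4.8 stateOrProvinceName
    bytes.fromhex("550407"): "L",              # 2.5.4.7 localityName
    bytes.fromhex("55040a"): "O",              # 2.5.4.10 organizationName
    bytes.fromhex("55040b"): "OU",             # 2.5.4.11 organizationalUnitName
    bytes.fromhex("550403"): "CN",             # 2.5.4.3 commonName
    bytes.fromhex("2a864886f70d010901"): "E",  # 1.2.840.113549.1.9.1 emailAddress
}


def read_tlv(buf, pos):
    """Return (tag, value, next_pos) for the TLV that starts at buf[pos]."""
    tag, length = buf[pos], buf[pos + 1]
    if length & 0x80:
        raise ValueError("long-form length: not handled by this sketch")
    start = pos + 2
    return tag, buf[start:start + length], start + length


def dn_string_types(der):
    """List (attribute, string type) pairs for a DER-encoded Name."""
    tag, rdns, _ = read_tlv(der, 0)
    if tag != 0x30:
        raise ValueError("a DN must start with a SEQUENCE")
    result, pos = [], 0
    while pos < len(rdns):
        _, rdn, pos = read_tlv(rdns, pos)            # SET (one RDN)
        _, atv, _ = read_tlv(rdn, 0)                 # first SEQUENCE in the SET
        _, oid, value_pos = read_tlv(atv, 0)         # OBJECT IDENTIFIER
        value_tag, _, _ = read_tlv(atv, value_pos)   # the attribute's string value
        result.append((OID_NAMES.get(oid, oid.hex()),
                       STRING_TAGS.get(value_tag, hex(value_tag))))
    return result


# Hex bytes from the bad-case IDr payload in the ASA debug output.
ASA_IDR_HEX = """
30 76 31 0b 30 09 06 03 55 04 06 13 02 55 53 31
0b 30 09 06 03 55 04 08 13 02 4e 43 31 0c 30 0a
06 03 55 04 07 13 03 52 54 50 31 0e 30 0c 06 03
55 04 0a 13 05 43 69 73 63 6f 31 0c 30 0a 06 03
55 04 0b 13 03 43 53 53 31 0c 30 0a 06 03 55 04
03 13 03 72 70 69 31 20 30 1e 06 09 2a 86 48 86
f7 0d 01 09 01 16 11 65 6c 70 61 72 69 73 40 63
69 73 63 6f 2e 63 6f 6d
"""

for attr, string_type in dn_string_types(bytes.fromhex(ASA_IDR_HEX)):
    print(f"{attr}: {string_type}")
```

Run against that dump, it reports PrintableString for every attribute except the e-mail address, which uses IA5String.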

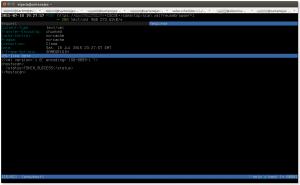

In the good case, one sees messages like:

%ASA-7-711001: IKEv2-PROTO-5: (35): SM Trace-> SA: I_SPI=03542332E12C42F4 R_SPI=3E95373B6C8C25AF (I) MsgID = 00000001 CurState: I_WAIT_AUTH Event: EV_RECV_AUTH
%ASA-7-711001: IKEv2-PROTO-5: (35): Action: Action_Null
%ASA-7-711001: IKEv2-PROTO-5: (35): SM Trace-> SA: I_SPI=03542332E12C42F4 R_SPI=3E95373B6C8C25AF (I) MsgID = 00000001 CurState: I_PROC_AUTH Event: EV_CHK4_NOTIFY
%ASA-7-711001: IKEv2-PROTO-2: (35): Process auth response notify
%ASA-7-711001: IKEv2-PROTO-5: (35): SM Trace-> SA: I_SPI=03542332E12C42F4 R_SPI=3E95373B6C8C25AF (I) MsgID = 00000001 CurState: I_PROC_AUTH Event: EV_PROC_MSG
%ASA-7-711001: IKEv2-PLAT-2: (35): peer auth method set to: 1
%ASA-7-711001: IKEv2-PROTO-5: (35): SM Trace-> SA: I_SPI=03542332E12C42F4 R_SPI=3E95373B6C8C25AF (I) MsgID = 00000001 CurState: I_PROC_AUTH Event: EV_CHK_IF_PEER_CERT_NEEDS_TO_BE_FETCHED_FOR_PROF_SEL
%ASA-7-711001: IKEv2-PROTO-5: (35): SM Trace-> SA: I_SPI=03542332E12C42F4 R_SPI=3E95373B6C8C25AF (I) MsgID = 00000001 CurState: I_PROC_AUTH Event: EV_GET_POLICY_BY_PEERID

Notice that after the EV_PROC_MSG event there is no retransmit event. In the logs I could see that eventually (after checking the revocation status of the certificate, etc.) the state machine leaves the I_PROC_AUTH state and the connection is established.
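Because the ASA gives no explicit error, the only signal in these traces is the order of state-machine events. A small log filter can flag the bad pattern (EV_PROC_MSG immediately followed by EV_RE_XMT). This is a hypothetical helper based purely on the two traces above; it is a heuristic, not a documented Cisco diagnostic, and the sample lines are trimmed copies of the ones shown.

```python
import re

# Matches the "CurState: <state> Event: <event>" part of IKEv2 SM Trace lines.
SM_TRACE = re.compile(r"CurState: (\S+) Event: (\S+)")


def sm_transitions(lines):
    """Return (state, event) pairs from ASA IKEv2 state-machine trace lines."""
    pairs = []
    for line in lines:
        match = SM_TRACE.search(line)
        if match:
            pairs.append(match.groups())
    return pairs


def retransmits_after_proc_msg(lines):
    """True if an EV_PROC_MSG event is immediately followed by EV_RE_XMT."""
    events = [event for _, event in sm_transitions(lines)]
    return any(a == "EV_PROC_MSG" and b == "EV_RE_XMT"
               for a, b in zip(events, events[1:]))


bad = [
    "SM Trace-> SA: (I) MsgID = 00000001 CurState: I_WAIT_AUTH Event: EV_RECV_AUTH",
    "SM Trace-> SA: (I) MsgID = 00000001 CurState: I_PROC_AUTH Event: EV_CHK4_NOTIFY",
    "SM Trace-> SA: (I) MsgID = 00000001 CurState: I_PROC_AUTH Event: EV_PROC_MSG",
    "IKEv2-PLAT-2: (34): peer auth method set to: 1",
    "SM Trace-> SA: (I) MsgID = 00000001 CurState: I_WAIT_AUTH Event: EV_RE_XMT",
]
good = [
    "SM Trace-> SA: (I) MsgID = 00000001 CurState: I_WAIT_AUTH Event: EV_RECV_AUTH",
    "SM Trace-> SA: (I) MsgID = 00000001 CurState: I_PROC_AUTH Event: EV_CHK4_NOTIFY",
    "SM Trace-> SA: (I) MsgID = 00000001 CurState: I_PROC_AUTH Event: EV_PROC_MSG",
    "SM Trace-> SA: (I) MsgID = 00000001 CurState: I_PROC_AUTH Event: EV_GET_POLICY_BY_PEERID",
]
print(retransmits_after_proc_msg(bad), retransmits_after_proc_msg(good))
```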

The strongSwan configuration that triggered the problem above specified “leftid” in /etc/ipsec.conf, i.e.:

conn net-net
    leftcert=rpi.pem
    leftsubnet=10.123.1.0/24
    leftid="C=US, ST=CA, L=SF, O=Acme, OU=CSS, CN=myid, E=admin@example.com"
    leftfirewall=yes
    right=10.122.109.113
    rightid="C=US, ST=CA, L=SF, O=Acme, OU=CSS, CN=asa, E=admin@example.com"

If leftid is removed and strongSwan is left to determine automatically the identity to send to the Cisco ASA/FTD, the problem does not occur. I think this is because strongSwan then does not build the ID from scratch but instead takes it from the identity certificate.

Here’s a diff of the output of “openssl asn1parse” for the IKEv2 ID encoded with “UTF8STRING” fields (lines starting with “-”) and with “PRINTABLESTRING” fields (lines starting with “+”):

  15:d=1 hl=2 l= 11 cons: SET
  17:d=2 hl=2 l= 9 cons: SEQUENCE
  19:d=3 hl=2 l= 3 prim: OBJECT :stateOrProvinceName
- 24:d=3 hl=2 l= 2 prim: UTF8STRING :CA
+ 24:d=3 hl=2 l= 2 prim: PRINTABLESTRING :CA
  28:d=1 hl=2 l= 12 cons: SET
  30:d=2 hl=2 l= 10 cons: SEQUENCE
  32:d=3 hl=2 l= 3 prim: OBJECT :localityName
- 37:d=3 hl=2 l= 3 prim: UTF8STRING :SF
+ 37:d=3 hl=2 l= 3 prim: PRINTABLESTRING :SF
  42:d=1 hl=2 l= 14 cons: SET
  44:d=2 hl=2 l= 12 cons: SEQUENCE
  46:d=3 hl=2 l= 3 prim: OBJECT :organizationName
- 51:d=3 hl=2 l= 5 prim: UTF8STRING :Acme
+ 51:d=3 hl=2 l= 5 prim: PRINTABLESTRING :Acme
  58:d=1 hl=2 l= 12 cons: SET
  60:d=2 hl=2 l= 10 cons: SEQUENCE
  62:d=3 hl=2 l= 3 prim: OBJECT :organizationalUnitName
- 67:d=3 hl=2 l= 3 prim: UTF8STRING :CSS
+ 67:d=3 hl=2 l= 3 prim: PRINTABLESTRING :CSS
  72:d=1 hl=2 l= 12 cons: SET
  74:d=2 hl=2 l= 10 cons: SEQUENCE
  76:d=3 hl=2 l= 3 prim: OBJECT :commonName
- 81:d=3 hl=2 l= 3 prim: UTF8STRING :rpi
+ 81:d=3 hl=2 l= 3 prim: PRINTABLESTRING :rpi
  86:d=1 hl=2 l= 32 cons: SET
  88:d=2 hl=2 l= 30 cons: SEQUENCE
  90:d=3 hl=2 l= 9 prim: OBJECT :emailAddress
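The diff boils down to a single tag byte per attribute: in DER, UTF8String is tag 0x0C and PrintableString is tag 0x13, while all lengths stay the same. A minimal sketch that encodes the stateOrProvinceName RDN both ways makes this visible; the helper names are hypothetical and only short-form lengths are handled. The resulting SET/SEQUENCE/OBJECT/string lengths (11, 9, 3, 2) match the openssl output above.

```python
UTF8_STRING = 0x0C
PRINTABLE_STRING = 0x13


def tlv(tag, value):
    """Encode one DER TLV with a short-form length (< 128 bytes)."""
    if len(value) > 127:
        raise ValueError("short-form length only in this sketch")
    return bytes([tag, len(value)]) + value


def rdn(oid, text, string_tag):
    """One RelativeDistinguishedName: SET { SEQUENCE { OID, string } }."""
    atv = tlv(0x30, tlv(0x06, oid) + tlv(string_tag, text.encode("ascii")))
    return tlv(0x31, atv)


ST_OID = bytes.fromhex("550408")  # 2.5.4.8 stateOrProvinceName
utf8 = rdn(ST_OID, "CA", UTF8_STRING)
printable = rdn(ST_OID, "CA", PRINTABLE_STRING)
print(utf8.hex(" "))       # 31 0b 30 09 06 03 55 04 08 0c 02 43 41
print(printable.hex(" "))  # 31 0b 30 09 06 03 55 04 08 13 02 43 41
```

Only byte 9 (the string tag) differs between the two encodings.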

Workaround for this issue: Do not use leftid and let strongSwan figure out the IKEv2 ID that it needs to present to the Cisco ASA/FTD.

Multiple Traffic Selectors Under Same Child SA

If the strongSwan configuration specifies multiple networks in a single traffic selector setting, like local_ts in this configuration:

children {
    # CHILD_SA configuration sub-section.
    lab-vpn {
        # Local traffic selectors to include in CHILD_SA.
        # local_ts = dynamic
        local_ts = 10.123.1.0/24, 10.10.0.0/16
        # Remote selectors to include in CHILD_SA.
        # remote_ts = dynamic
        remote_ts = 10.123.0.0/24
    }
}

then the Cisco device receives TSi and TSr payloads in an IKEv2 message that look like these:

TSi Next payload: TSr, reserved: 0x0, length: 56
  Num of TSs: 3, reserved 0x0, reserved 0x0
  TS type: TS_IPV4_ADDR_RANGE, proto id: 1, length: 16
    start port: 2048, end port: 2048
    start addr: 10.123.1.2, end addr: 10.123.1.2
  TS type: TS_IPV4_ADDR_RANGE, proto id: 0, length: 16
    start port: 0, end port: 65535
    start addr: 10.123.1.0, end addr: 10.123.1.255
  TS type: TS_IPV4_ADDR_RANGE, proto id: 0, length: 16
    start port: 0, end port: 65535
    start addr: 10.10.0.0, end addr: 10.10.255.255
TSr Next payload: NOTIFY, reserved: 0x0, length: 40
  Num of TSs: 2, reserved 0x0, reserved 0x0
  TS type: TS_IPV4_ADDR_RANGE, proto id: 1, length: 16
    start port: 2048, end port: 2048
    start addr: 10.123.0.5, end addr: 10.123.0.5
  TS type: TS_IPV4_ADDR_RANGE, proto id: 0, length: 16
    start port: 0, end port: 65535
    start addr: 10.123.0.0, end addr: 10.123.0.255

As can be seen, the TSi payload contains multiple Traffic Selectors: one for 10.123.1.0/24 and another one for 10.10.0.0/16, in addition to a first, narrower selector for the specific packet that triggered the negotiation. This is based on the strongSwan configuration “local_ts = 10.123.1.0/24, 10.10.0.0/16”.

The idea is that the IPsec gateway that strongSwan is talking to should create IPsec Security Associations (SAs) for 10.123.1.0/24 <-> 10.123.0.0/24 and for 10.10.0.0/16 <-> 10.123.0.0/24.
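The address ranges in those selectors follow directly from the CIDR prefixes in local_ts and remote_ts. The first, single-address selector in each payload has proto id 1 (ICMP). As I understand RFC 7296 (section 3.13.1), ICMP selectors carry the ICMP type in the high byte and the code in the low byte of the port field, so port 2048 is type 8, code 0, an echo request; presumably this is the ping that hit the trap policy. A minimal sketch with hypothetical function names:

```python
import ipaddress


def cidr_to_ts_range(cidr):
    """Start and end addresses of the TS_IPV4_ADDR_RANGE covering a subnet."""
    net = ipaddress.ip_network(cidr)
    return str(net.network_address), str(net.broadcast_address)


def icmp_type_code(ts_port):
    """Split an ICMP selector 'port' into (type, code): type is the high byte."""
    return ts_port >> 8, ts_port & 0xFF


for cidr in ("10.123.1.0/24", "10.10.0.0/16", "10.123.0.0/24"):
    print(cidr, "->", cidr_to_ts_range(cidr))
print("port 2048 ->", icmp_type_code(2048))  # (8, 0): echo request
```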

Unfortunately, Cisco devices do not support this and instead only create SAs for the first traffic selector in the IKE message. There is a Cisco bug for this issue on Cisco ASA, but it does not appear that it will be fixed any time soon (as of May 2018):

CSCue42170 (“IKEv2: Support Multi Selector under the same child SA”)

strongSwan users have reported the problem:

https://wiki.strongswan.org/issues/758

A workaround has been proposed: create multiple connections, one for each protected network, instead of one connection with multiple protected networks.
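The workaround can be scripted. A minimal sketch, assuming the swanctl.conf layout of the lab-vpn example earlier in this post: it emits one connection, each with a single CHILD_SA, per protected local network. The function name and the naming scheme (lab-vpn-1, lab-vpn-2, ...) are hypothetical, and the generated configuration has not been tested against an ASA.

```python
def per_network_connections(base_name, remote_addr, cert, local_subnets, remote_ts):
    """Emit swanctl.conf text with one connection per local subnet."""
    lines = ["connections {"]
    for index, subnet in enumerate(local_subnets, start=1):
        name = f"{base_name}-{index}"  # hypothetical naming scheme
        lines += [
            f"    {name} {{",
            "        version = 2",
            f"        remote_addrs = {remote_addr}",
            "        local-1 {",
            f"            certs = {cert}",
            "        }",
            "        children {",
            f"            {name} {{",
            f"                local_ts = {subnet}",
            f"                remote_ts = {remote_ts}",
            "                start_action = trap",
            "            }",
            "        }",
            "    }",
        ]
    lines.append("}")
    return "\n".join(lines) + "\n"


print(per_network_connections("lab-vpn", "10.1.10.114", "rpi.pem",
                              ["10.123.1.0/24", "10.10.0.0/16"], "10.123.0.0/24"))
```

Each generated connection carries exactly one local traffic selector, so the Cisco side never has to handle more than one selector per CHILD_SA.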

Static CRL Revocation Check No Longer Works

If you had a configuration like this before ASA 9.13.1:

crypto ca trustpoint <trustpoint>
 crl configure
  policy static
  url 1 http://x

then it will no longer work, because the “url” command was removed in ASA Software version 9.13.1 and later. The new way to configure the same thing is:

crypto ca certificate map <map> 10
 issuer-name attr cn co <cn> issuing
 issuer-name attr dc eq <dc>
crypto ca trustpoint <trustpoint>
 match certificate <map> override cdp 1 url http://x
 crl configure
  policy static

but it requires ASA versions 9.13.1.12 or later, or 9.14.1.12 or later.

This is tracked by Cisco bug ID CSCvu05216 (“cert map to specify CRL CDP Override does not allow backup entries”).

The removal of the “url” command is documented in the ASA Software 9.13 release notes under “Important Notes”:

https://www.cisco.com/c/en/us/td/docs/security/asa/asa913/release/notes/asarn913.html#reference_yw3_ngz_vhb

“Removal of CRL Distribution Point commands—The static CDP URL configuration commands, namely crypto-ca-trustpoint crl and crl url were removed with other related logic.

Note: The CDP URL configuration option was restored later (refer CSCvu05216).”

Conclusion

A site-to-site IPsec VPN tunnel between a Cisco ASA/FTD and strongSwan running on Linux, using certificates for authentication, comes up just fine, but I ran into the issues described above along the way. All of them have reasonable workarounds. Most are probably bugs that I’ll try to report to the respective parties.